The architecture, in one line

In GenAIoT, the “model” is not the system. The system is model + context + tools + controls.

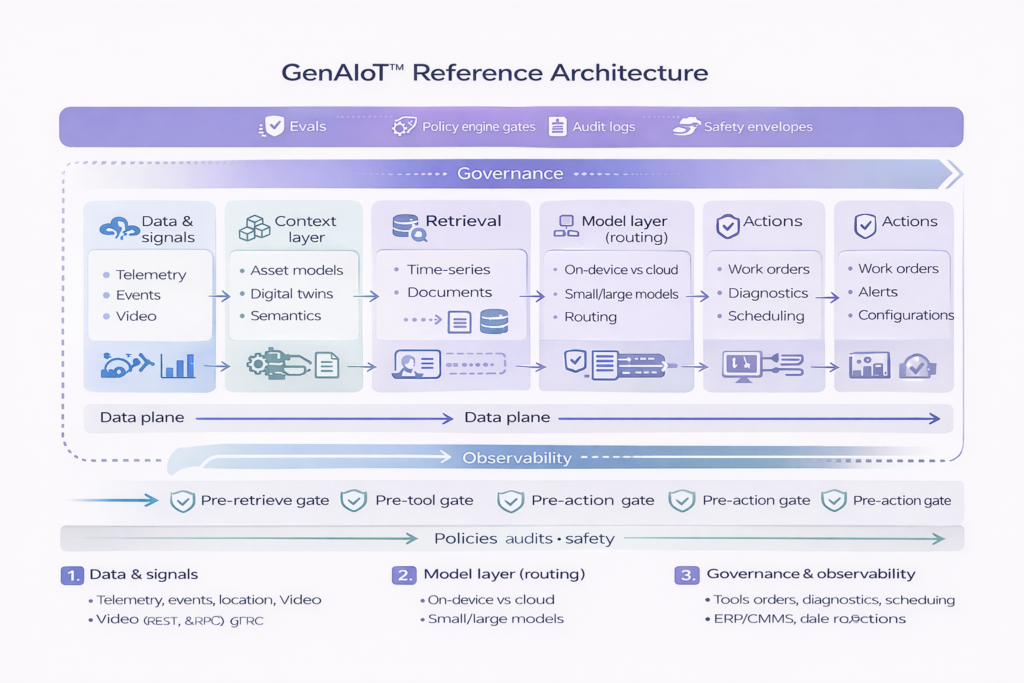

GenAIoT systems safely connect real-world signals to real-world outcomes by combining edge and cloud intelligence with a context layer, retrieval, governed tool use, and end-to-end observability.

The 6 MVP building blocks

01.

Data & signals

This layer is the system’s “ground truth”. Its inputs are high-volume and high-variance, such as:

- Telemetry and events (time-series, logs, alarms)

- Video and audio streams (where applicable)

- Location, environment, and network state

- Enterprise operational signals (tickets, work orders, parts, schedules)

02.

Context layer

Context turns data into meaning. This layer provides structure and constraints, such as:

- Asset models (identity, hierarchy, relationships, topology)

- Digital twins (state + constraints over time)

- Semantics/ontology (consistent vocabulary across systems)

- Feature store and derived signals (shared, reusable inputs)
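
As an illustration of how an asset model can attach meaning to a raw reading, here is a minimal sketch. The `Asset` and `AssetModel` classes, the asset IDs, and the `bearing_temp_c` limit are all hypothetical and exist only for this example. A production context layer would more likely sit on a graph store or a twin platform.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Asset:
    """One node in a hypothetical asset hierarchy (site -> line -> machine)."""
    asset_id: str
    kind: str
    parent_id: Optional[str] = None
    # Constraints a twin might carry, e.g. {"bearing_temp_c": (0.0, 85.0)}
    limits: dict = field(default_factory=dict)


class AssetModel:
    def __init__(self) -> None:
        self._assets = {}

    def add(self, asset: Asset) -> None:
        self._assets[asset.asset_id] = asset

    def path(self, asset_id: str) -> list:
        """Return the hierarchy from the root down to the given asset."""
        chain = []
        current = self._assets.get(asset_id)
        while current is not None:
            chain.append(current.asset_id)
            current = self._assets.get(current.parent_id) if current.parent_id else None
        return list(reversed(chain))

    def contextualize(self, asset_id: str, signal: str, value: float) -> dict:
        """Attach hierarchy and limit information to a raw reading."""
        asset = self._assets[asset_id]
        low, high = asset.limits.get(signal, (float("-inf"), float("inf")))
        return {
            "asset_path": self.path(asset_id),
            "signal": signal,
            "value": value,
            "within_limits": low <= value <= high,
        }


model = AssetModel()
model.add(Asset("plant-1", "site"))
model.add(Asset("line-3", "line", parent_id="plant-1"))
model.add(Asset("pump-7", "pump", parent_id="line-3",
                limits={"bearing_temp_c": (0.0, 85.0)}))

# A bare number becomes "pump-7 on line-3 at plant-1 is outside its limit".
reading = model.contextualize("pump-7", "bearing_temp_c", 91.2)
```

The same enriched record can then feed retrieval and prompting, so downstream layers do not have to rediscover where a signal came from.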

03.

Retrieval (time-series + knowledge)

GenAIoT relies on retrieval to ground outputs and reduce hallucinations:

- Time-series retrieval: baselines, historical windows, similar incidents, correlated signals

- Document retrieval: manuals, SOPs, tickets, runbooks, safety policies, vendor notes

- Hybrid retrieval: merge telemetry context with text sources and rank by relevance/recency
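
One possible way to rank merged hits by relevance and recency is a weighted blend with exponential time decay. The sketch below assumes each retriever already returns a relevance score in the range 0 to 1. The `Hit` type, the `alpha` weight, and the 72-hour half-life are illustrative assumptions and have not been tuned.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    source: str        # "timeseries" or "document"
    ref: str           # identifier used later for citation
    relevance: float   # 0..1, from whichever retriever produced the hit
    age_hours: float   # time since the window or document was recorded


def recency_weight(age_hours: float, half_life_hours: float) -> float:
    """Exponential decay: the weight halves every half_life_hours."""
    return 0.5 ** (age_hours / half_life_hours)


def hybrid_rank(hits, alpha=0.7, half_life_hours=72.0, top_k=3):
    """Blend relevance and recency; alpha is the weight on relevance."""
    def score(h: Hit) -> float:
        return alpha * h.relevance + (1 - alpha) * recency_weight(h.age_hours, half_life_hours)
    return sorted(hits, key=score, reverse=True)[:top_k]


hits = [
    Hit("document", "manual-pump-7#sec4", relevance=0.90, age_hours=8760.0),
    Hit("timeseries", "pump-7/vibration/last-24h", relevance=0.70, age_hours=1.0),
    Hit("document", "ticket-1042", relevance=0.60, age_hours=72.0),
]
ranked = hybrid_rank(hits)
```

With these example values the fresh telemetry window ranks first, the year-old manual second, and the three-day-old ticket third. A single half-life for all sources is a simplification, since manuals and SOPs usually age far more slowly than telemetry.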

04.

Model layer (routing + deployment posture)

Different tasks require different models and placements:

- Routing: select models by latency, cost, privacy, and reliability targets

- On-device vs cloud: edge inference for low latency/resilience; cloud for heavy reasoning

- Small vs large models: smaller models for classification/extraction; larger for synthesis/planning

- Guarded generation: constrained outputs, deterministic checks, and evaluation gates
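
Routing can be sketched as constraint filtering followed by a cost minimum. The model names, latency figures, costs, and capability levels below are invented for illustration and do not describe any real model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    placement: str        # "edge" or "cloud"
    p95_latency_ms: int
    cost_per_call: float
    capability: int       # 1 = classification/extraction, 3 = synthesis/planning


@dataclass(frozen=True)
class Task:
    min_capability: int
    max_latency_ms: int
    data_must_stay_local: bool = False


def route(task: Task, options: list) -> Optional[ModelOption]:
    """Pick the cheapest option that satisfies every hard constraint."""
    eligible = [
        m for m in options
        if m.capability >= task.min_capability
        and m.p95_latency_ms <= task.max_latency_ms
        and (m.placement == "edge" or not task.data_must_stay_local)
    ]
    return min(eligible, key=lambda m: m.cost_per_call, default=None)


# Hypothetical catalog; every number here is a placeholder.
CATALOG = [
    ModelOption("small-edge", "edge", p95_latency_ms=40, cost_per_call=0.0001, capability=1),
    ModelOption("mid-cloud", "cloud", p95_latency_ms=600, cost_per_call=0.002, capability=2),
    ModelOption("large-cloud", "cloud", p95_latency_ms=2500, cost_per_call=0.02, capability=3),
]

alarm_triage = route(Task(min_capability=1, max_latency_ms=100), CATALOG)
root_cause_report = route(Task(min_capability=3, max_latency_ms=5000), CATALOG)
private_planning = route(
    Task(min_capability=3, max_latency_ms=5000, data_must_stay_local=True), CATALOG
)
```

Here `alarm_triage` resolves to the edge model and `root_cause_report` to the large cloud model. `private_planning` returns `None` because no edge model in this catalog can plan. That is a useful signal in its own right: the caller can escalate to a human or relax a constraint explicitly instead of silently violating it.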

05.

Tools/agents (execution and orchestration)

This is where GenAIoT moves from insight to operational impact:

- Diagnostics and guided troubleshooting

- Work order creation and triage (ITSM / CMMS)

- Scheduling, dispatch, parts checks, escalation workflows

- Safe configuration changes (where permitted)

- Integrations with ERP/CMMS/SCADA/field apps
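
A small tool registry is one way to keep execution explicit: the model proposes a call, and only registered tools with valid arguments run. The tool names, the stock table, and the response shapes below are hypothetical stand-ins for real CMMS or ERP integrations.

```python
TOOLS = {}


def tool(name: str):
    """Register a function under a stable tool name."""
    def decorator(fn):
        TOOLS[name] = fn
        return fn
    return decorator


@tool("create_work_order")
def create_work_order(asset_id: str, summary: str, priority: str = "medium") -> dict:
    # Stand-in for a CMMS/ITSM API call; returns what such a call might echo back.
    if priority not in {"low", "medium", "high"}:
        raise ValueError(f"unknown priority: {priority}")
    return {"status": "created", "asset_id": asset_id,
            "summary": summary, "priority": priority}


@tool("check_part_stock")
def check_part_stock(part_no: str) -> dict:
    # Stand-in for an ERP lookup, backed here by a hard-coded table.
    stock = {"SEAL-22": 4, "BRG-6204": 0}
    return {"status": "ok", "part_no": part_no, "on_hand": stock.get(part_no, 0)}


def dispatch(call: dict) -> dict:
    """Execute a model-proposed call only if it names a registered tool."""
    fn = TOOLS.get(call.get("name"))
    if fn is None:
        return {"status": "rejected", "reason": f"unknown tool: {call.get('name')}"}
    try:
        return fn(**call.get("args", {}))
    except (TypeError, ValueError) as exc:
        # Bad or missing arguments are reported back instead of crashing the loop.
        return {"status": "rejected", "reason": str(exc)}


result = dispatch({
    "name": "create_work_order",
    "args": {"asset_id": "pump-7", "summary": "Replace shaft seal", "priority": "high"},
})
```

Returning a structured rejection rather than raising lets the calling agent explain the failure or retry with corrected arguments.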

06.

Governance & observability

This is what makes GenAIoT deployable at scale:

- Evals & testing: golden sets, scenario tests, regression, red-teaming

- Audit logs: who/what/when/why + source citations + tool calls + outcomes

- Safety envelopes: allowed actions, thresholds, approvals, rollback conditions

- Prompt/tool governance: prompt registry, tool permissions (RBAC/ABAC), rate limits

- Monitoring: drift, hallucination indicators, failure modes, cost, latency, reliability
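
To make safety envelopes and audit logs concrete, the sketch below evaluates a proposed setpoint change against a per-tool envelope and builds an audit entry. The field names, roles, thresholds, and the `set_pump_speed` tool are assumptions chosen for illustration.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Envelope:
    """Hypothetical safety envelope for one tool."""
    tool: str
    allowed_roles: frozenset
    max_setpoint_delta: float   # largest change allowed per call
    approval_above: float       # changes above this need a human approver


def evaluate(envelope: Envelope, role: str, delta: float) -> str:
    """Return 'deny', 'needs_approval', or 'allow' for a proposed change."""
    if role not in envelope.allowed_roles or abs(delta) > envelope.max_setpoint_delta:
        return "deny"
    if abs(delta) > envelope.approval_above:
        return "needs_approval"
    return "allow"


def audit_record(actor, tool, args, decision, citations, reason) -> dict:
    """Build one audit entry: who/what/when/why plus sources and decision."""
    return {
        "when": datetime.now(timezone.utc).isoformat(),
        "who": actor,
        "what": {"tool": tool, "args": args},
        "why": reason,
        "citations": citations,
        "decision": decision,
    }


envelope = Envelope(
    tool="set_pump_speed",
    allowed_roles=frozenset({"operator", "agent"}),
    max_setpoint_delta=10.0,
    approval_above=3.0,
)

decision = evaluate(envelope, role="agent", delta=5.0)
entry = audit_record(
    actor="agent:copilot-1",
    tool="set_pump_speed",
    args={"delta_pct": 5.0},
    decision=decision,
    citations=["sop-114#step3"],
    reason="vibration above baseline",
)
line = json.dumps(entry)  # one JSON line per decision, ready for an append-only log
```

A 5% change falls inside the hard limit but above the approval threshold, so `decision` is `"needs_approval"`. Logging the decision even when nothing executes keeps denied and pending actions auditable too.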

Data plane vs control plane

Data plane

Signals + context + retrieval that feed reasoning and recommendations.

Control plane

Policies + approvals + audits + evals that constrain and validate actions.

A common failure mode is building the data plane first and then trying to “bolt on” governance later.

Deployment patterns (MVP list)

Pattern A: RAG Copilot for Operations

Use when: you need faster troubleshooting and higher-quality decisions.

Inputs: telemetry + tickets + manuals

Output: grounded explanations + recommended next steps with citations
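
Pattern A can be sketched as prompt assembly plus a deterministic citation check on the answer. The model call itself is omitted. The prompt wording, citation IDs, and passage text are illustrative assumptions.

```python
import re


def build_prompt(question: str, telemetry: dict, passages: dict) -> str:
    """Assemble a grounded prompt; passages maps citation id -> text."""
    lines = ["Answer using only the context below. Cite sources as [id].", "", "Telemetry:"]
    lines += [f"- {name}: {value}" for name, value in telemetry.items()]
    lines += ["", "Sources:"]
    lines += [f"[{cid}] {text}" for cid, text in passages.items()]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


def check_citations(answer: str, passages: dict) -> dict:
    """Every cited id must exist, and at least one source must be cited."""
    cited = set(re.findall(r"\[([^\]]+)\]", answer))
    unknown = cited - set(passages)
    return {
        "cited": sorted(cited),
        "unknown": sorted(unknown),
        "ok": bool(cited) and not unknown,
    }


passages = {
    "manual-4.2": "Cavitation typically presents as noise and falling discharge pressure.",
    "ticket-1042": "Previous incident on pump-7: suction strainer partially blocked.",
}
prompt = build_prompt(
    "Why is pump-7 noisy?",
    {"discharge_pressure_bar": 3.1, "vibration_mm_s": 7.4},
    passages,
)

# Stand-ins for model outputs; a real system would get these from the model layer.
good = check_citations("Likely cavitation [manual-4.2]; see also [ticket-1042].", passages)
bad = check_citations("Replace the impeller [manual-9.9].", passages)
```

The check only verifies that citations point at retrieved sources, not that the cited text supports the claim. Stronger grounding checks belong in the evaluation layer.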

Pattern B: Guided Automation (Human-in-the-loop)

Use when: actions impact reliability, safety, or cost.

Tools: work orders, scheduling, diagnostics

Controls: approvals + policy gates + rollback
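
A minimal sketch of the approval-and-rollback flow in Pattern B follows. The `state` dictionary stands in for a real controller. The `approve` callback represents whatever human approval channel a team uses. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str
    apply: Callable[[], None]
    rollback: Callable[[], None]
    verify: Callable[[], bool]   # post-condition checked after apply


def run_with_approval(action: ProposedAction, approve: Callable[[str], bool]) -> str:
    """Gate an action on human approval; roll back if the post-check fails."""
    if not approve(action.description):
        return "rejected"
    action.apply()
    if action.verify():
        return "applied"
    action.rollback()
    return "rolled_back"


# Toy device state standing in for a real controller.
state = {"speed_pct": 80}


def make_speed_change(new_speed: int, healthy_max: int) -> ProposedAction:
    previous = state["speed_pct"]   # captured now so rollback restores this value
    return ProposedAction(
        description=f"Change pump speed {previous}% -> {new_speed}%",
        apply=lambda: state.update(speed_pct=new_speed),
        rollback=lambda: state.update(speed_pct=previous),
        verify=lambda: state["speed_pct"] <= healthy_max,
    )


# The approver says yes, but the post-check fails, so the change is undone.
outcome = run_with_approval(make_speed_change(95, healthy_max=90), approve=lambda desc: True)
```

After this run `outcome` is `"rolled_back"` and `state["speed_pct"]` is back at 80. The sketch does not handle exceptions raised inside `apply`; a production version would likely treat those as a rollback trigger as well.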

Pattern C: Edge-first Intelligence

Use when: latency, privacy, or resilience dominates.

Edge: local inference + local context

Cloud: periodic learning, heavier analysis, fleet-wide insights
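
The edge-first pattern can be illustrated with local anomaly scoring and a store-and-forward buffer. The z-score rule, window size, and buffer size are simple placeholder choices, and `upload` stands in for any cloud client.

```python
from collections import deque
from statistics import mean, pstdev


class EdgeNode:
    """Scores readings locally and buffers events until the cloud is reachable."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0, buffer_size: int = 1000):
        self.history = deque(maxlen=window)
        self.outbox = deque(maxlen=buffer_size)   # oldest entries drop first when full
        self.z_threshold = z_threshold

    def ingest(self, value: float) -> bool:
        """Return True if the reading is anomalous relative to the local window."""
        anomalous = False
        if len(self.history) >= 5:   # wait for a few samples before scoring
            mu, sigma = mean(self.history), pstdev(self.history)
            anomalous = sigma > 0 and abs(value - mu) / sigma > self.z_threshold
        self.history.append(value)
        if anomalous:
            self.outbox.append({"kind": "anomaly", "value": value})
        return anomalous

    def sync(self, upload, online: bool) -> int:
        """Flush buffered events through upload() when connectivity allows."""
        sent = 0
        while online and self.outbox:
            upload(self.outbox.popleft())
            sent += 1
        return sent


node = EdgeNode()
flags = [node.ingest(v) for v in [50.0, 50.2, 49.9, 50.1, 50.0, 50.1, 75.0]]

received = []                                    # stand-in for the cloud side
sent_offline = node.sync(received.append, online=False)
sent_online = node.sync(received.append, online=True)
```

Detection keeps working while the link is down, and the single anomaly is delivered once connectivity returns. Only flagged events leave the device in this sketch, which is one way to limit bandwidth and data exposure.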

Quick checklist for teams adopting the reference architecture

- Do we have an asset model or twin to anchor “context”?

- Can we retrieve both time-series history and operational documents?

- Do we route models by task/latency/cost rather than “one model everywhere”?

- Are tool calls gated by policy and approvals with rollback?

- Do we log provenance and outcomes end-to-end?

- Do we have evals that reflect real operating scenarios?